Here’s a timely guest entry from Jeffrey Witmer (Oberlin College).

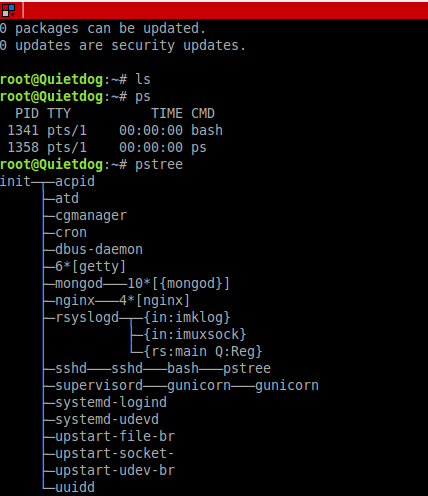

As the “Deflate Gate” saga was unfolding, Warren Sharp analyzed “touches per fumble” for NFL teams before and after 2006, when a rule was changed so that teams playing on the road could provide their own footballs (http://www.sharpfootballanalysis.com/blog/). Sharp noted that, for whatever reason, the Patriots went from being a typical team, as regards fumbling, to a team with a very low fumble rate. Rather than rely on the data the Sharp provides at his website, I choose to collect and analyze some data on my own. I took a random sample of 30 games played by New England and 30 other games. For each game, I recorded all rushing and passing plays (except for QB kneels), but excluded kicking plays (the NFL, rather than the teams, provides special footballs for those plays). (Data source: http://www.pro-football-reference.com/play-index/play_finder.cgi.) I also recorded the weather for the game. (Data source: http://www.wunderground.com/history/.) Once I had the data (in a file that I called AllBig, which can be downloaded from http://www.amherst.edu/~nhorton/AllBig.csv), I noted whether or not there was a fumble on each play, aided by the grep() command:

grep("Fumb", AllBig$Detail, ignore.case=TRUE)

I labeled each play as Late or not according to whether it happened after the rule change:

AllBig$Late 2006, 1, 0)

Now for the analysis. My data set has 7558 plays including 145 fumbles (1.9%). I used the mosaic package and the tally() command to see how often teams other than the Patriots fumble:

require(mosaic)

tally(~Fumble+Late, data=filter(AllBig,Pats==0))

Late

Fumble 0 1

0 2588 2919

1 54 65

Then I asked for the data in proportion terms:

tally(Fumble~Late, data=filter(AllBig,Pats==0))

and got

Late

Fumble 0 1

0 0.9796 0.9782

1 0.0204 0.0218

For non-Pats there is a tiny increase in fumbles. This can be displayed graphically using a mosaiplot (though it’s not a particularly compelling figure). mosaicplot(Fumble~Late, data=filter(AllBig,Pats==0)) Repeating this for the Patriots shows a different picture:

tally(~Fumble+Late, data=filter(AllBig,Pats==1))

Late

Fumble 0 1

0 996 910

1 19 7

tally(Fumble~Late, data=filter(AllBig,Pats==1))

Late

Fumble 0 1

0 0.98128 0.99237

1 0.01872 0.00763

I fit a logistic regression model with the glm() command: glm(Fumble~Late*Pats, family=binomial, data=AllBig)

Coefficients:

Estimate Std. Error z value Pr(>|z|)

(Intercept) -3.8697 0.1375 -28.14 Late 0.0650 0.1861 0.35 0.727

Pats -0.0897 0.2693 -0.33 0.739

Late:Pats -0.9733 0.4819 -2.02 0.043 *

I wanted to control for any weather effect, so I coded the weather as Bad if it was raining or snowing and good if not. This led to a model that includes BadWeather and Temperature – which turn out not to make much of a difference:

AllBig$BadWeather

glm(formula = Fumble ~ BadWeather + Temp + Late * Pats,

family = binomial, data = AllBig)

Coefficients:

Estimate Std. Error z value Pr(>|z|)

(Intercept) -4.23344 0.43164 -9.81 BadWeather 0.33259 0.29483 1.13 0.26

Temp 0.00512 0.00612 0.84 0.40

Late 0.08871 0.18750 0.47 0.64

Pats -0.14183 0.27536 -0.52 0.61

Late:Pats -0.91062 0.48481 -1.88 0.06 .

Because there was suspicion that something changed starting in 2007 I added a three-way interaction:

glm(formula = Fumble ~ BadWeather + Temp + IsAway * Late * Pats,

family = binomial, data = AllBig)

Coefficients:

Estimate Std. Error z value Pr(>|z|)

(Intercept) -4.51110 0.47707 -9.46 BadWeather 0.34207 0.30013 1.14 0.254

Temp 0.00831 0.00653 1.27 0.203

IsAway 0.14791 0.27549 0.54 0.591

Late 0.13111 0.26411 0.50 0.620

Pats -0.80019 0.54360 -1.47 0.141

IsAway:Late -0.07348 0.37463 -0.20 0.845

IsAway:Pats 0.94335 0.63180 1.49 0.135

Late:Pats 0.51536 0.71379 0.72 0.470

IsAway:Late:Pats -3.14345 1.29480 -2.43 0.015 *

There is some evidence here that the Patriots fumble less than the rest of the NFL and that things changed in 2007. The p-values above are based on asymptotic normality, but there is a cleaner and easier way to think about the Patriots’ fumble rate. I wrote a short simulation that mimics something I do in my statistics classes, where I use a physical deck of cards to show what each step in the R simulation is doing.

#Simulation of deflategate data null hypothesis

Late = rep(1,72) #creates 72 late fumbles

Early = rep(0,73) #creates 73 early fumbles

alldata = append(Late,Early) #puts the two groups together

table(alldata) #check to see that we have what we want

cards =1:length(alldata) # creates 145 cards, one "ID number" per fumble

FumbleLate = NULL # initializes a vector to hold the results

for (i in 1:10000){# starts a loop that will be executed 10,000 times

cardsgroup1 = sample(cards,119, replace=FALSE) # takes a sample of 119 cards

cardsgroup2 = cards[-cardsgroup1] # puts the remaining cards in group 2

NEPats = (alldata[cardsgroup2]) #reads the values of the cards in group 2

FumbleLate[i] = sum(NEPats) # counts NEPats late fumbles (the only stat we need)

}

table(FumbleLate) #look at the results

hist(FumbleLate, breaks=seq(2.5,23.5)) #graph the results

sum(FumbleLate

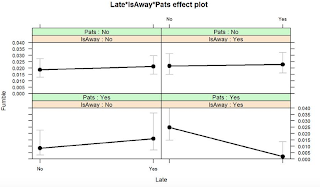

Additional note: kudos to Steve Taylor for the following graphical depiction of the interaction.

An unrelated note about aggregators:We love aggregators! Aggregators collect blogs that have similar coverage for the convenience of readers, and for blog authors they offer a way to reach new audiences. SAS and R is aggregated by R-bloggers, PROC-X, and statsblogs with our permission, and by at least 2 other aggregating services which have never contacted us. If you read this on an aggregator that does not credit the blogs it incorporates, please come visit us at SAS and R. We answer comments there and offer direct subscriptions if you like our content. In addition, no one is allowed to profit by this work under our license; if you see advertisements on this page, the aggregator is violating the terms by which we publish our work.

Read more →

.png)